If you have any basic experience within IT security, you’re likely to have heard of phishing. It is one of the longest-standing, most effective and easiest to pull off attack techniques there is. Although there are usually various detections in place, a few emails will often still manage to slip through and catch out unwary recipients.

I am a Cyber Security Analyst for Bridewell, and I have recently been getting to grips with using the Azure platform for security and events management. As part of that, I have been learning to use Microsoft’s own Kusto Query Language, or KQL. As a proactive SOC we are always looking for ways to improve our detections, because if we cannot detect something, we cannot respond to it.

I was recently tasked with looking into a way to expand upon our existing phishing detections, and this led me to a great blog by Stuart Gregg on the matter.

Although Stuart provides a great initial query here, I found when running this against our logs there were huge numbers of false positives. I then decided to start adding some context around known events and senders as suggested, and found the overall query started getting more complex.

As someone still getting to grips with KQL (and query languages in general), there was a large amount of troubleshooting involved until I was able to produce the results I wanted.

My hope with this blog is to clearly explain my logical steps for each addition, so that others in my position can take away some basic KQL knowledge and apply this to their own advanced detection. I will provide screenshots of every addition and aim to bullet point through the KQL. If you have established KQL knowledge some of these steps and operators may be overexplained, but I hope that the resulting query is still of some inspirational use.

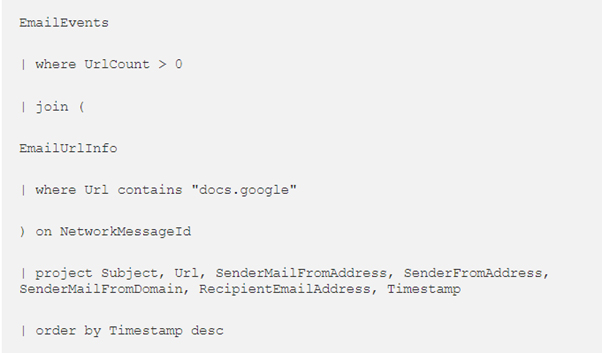

To begin with, here’s the provided initial framework:

This query searches the EmailEvents table for any email containing at least one URL, then searches the EmailUrlInfo table for any matches on the specified URL (in this case docs.google), and joins the two sets of results on their shared NetworkMessageId to ensure they come from the same email. I will explain the join operator further on. The final part establishes which columns to display in the results and how to order them.
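As a rough reconstruction of the query described above (a sketch built from that description, not necessarily Stuart's exact query), it might look something like the following. The column names assume the standard Microsoft 365 Defender advanced hunting schema, and the projected columns are my own choice for illustration.

```kusto
// Sketch reconstructed from the description above; not the original query text.
// Assumes the standard EmailEvents and EmailUrlInfo advanced hunting schemas.
EmailEvents
| where UrlCount > 0                       // only emails containing at least one URL
| join kind=inner (
    EmailUrlInfo
    | where Url contains "docs.google"     // the URL pattern of interest
    | project NetworkMessageId, Url
  ) on NetworkMessageId                    // same ID means same email
| project Timestamp, SenderFromAddress, RecipientEmailAddress, Subject, Url, NetworkMessageId
| order by Timestamp desc                  // newest results first
```

Filtering EmailUrlInfo inside the join's subquery keeps the right-hand side small before the join runs, which generally helps on large mail logs.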

Although this was a great starting point, the vast number of false positives returned by the query indicated that I would need to make some additions.

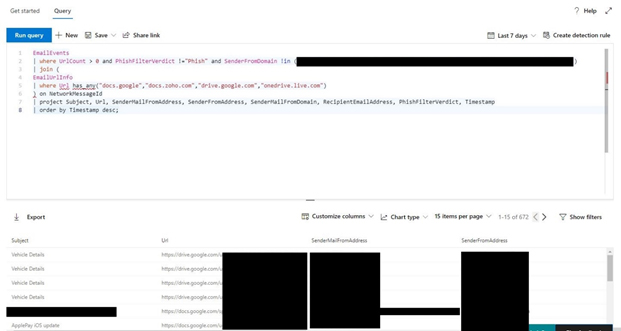

The first things that seemed worth adding were:

- Excluding any results that had already been detected by the phish filter
- Adding a list of approved senders
- Adding more potentially interesting phishing URLs

The earliest additions started to look something like this:
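A minimal sketch of those three additions, assuming the standard advanced hunting schema, is shown here. It is not the exact query from our environment: the sender addresses and extra URL patterns are hypothetical placeholders, and using the ThreatTypes column to identify emails the phish filter already caught is an assumption about how that exclusion could be expressed.

```kusto
// Illustrative sketch only. All list values below are hypothetical placeholders.
let ApprovedSenders = dynamic(["noreply@example.com", "reports@example.org"]);
let SuspiciousUrls = dynamic(["docs.google", "forms.office.com"]);
EmailEvents
| where UrlCount > 0                               // only emails containing at least one URL
| where ThreatTypes !has "Phish"                   // assumption: skip emails already flagged as phishing
| where SenderFromAddress !in~ (ApprovedSenders)   // skip approved senders, case-insensitively
| join kind=inner (
    EmailUrlInfo
    | where Url has_any (SuspiciousUrls)           // match any of the listed URL patterns
    | project NetworkMessageId, Url
  ) on NetworkMessageId                            // same ID means same email
| project Timestamp, SenderFromAddress, RecipientEmailAddress, Subject, Url, NetworkMessageId
| order by Timestamp desc
```

Declaring the lists with `let` at the top keeps the filters readable and means new senders or URL patterns can be added in one place as the detection is tuned.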
